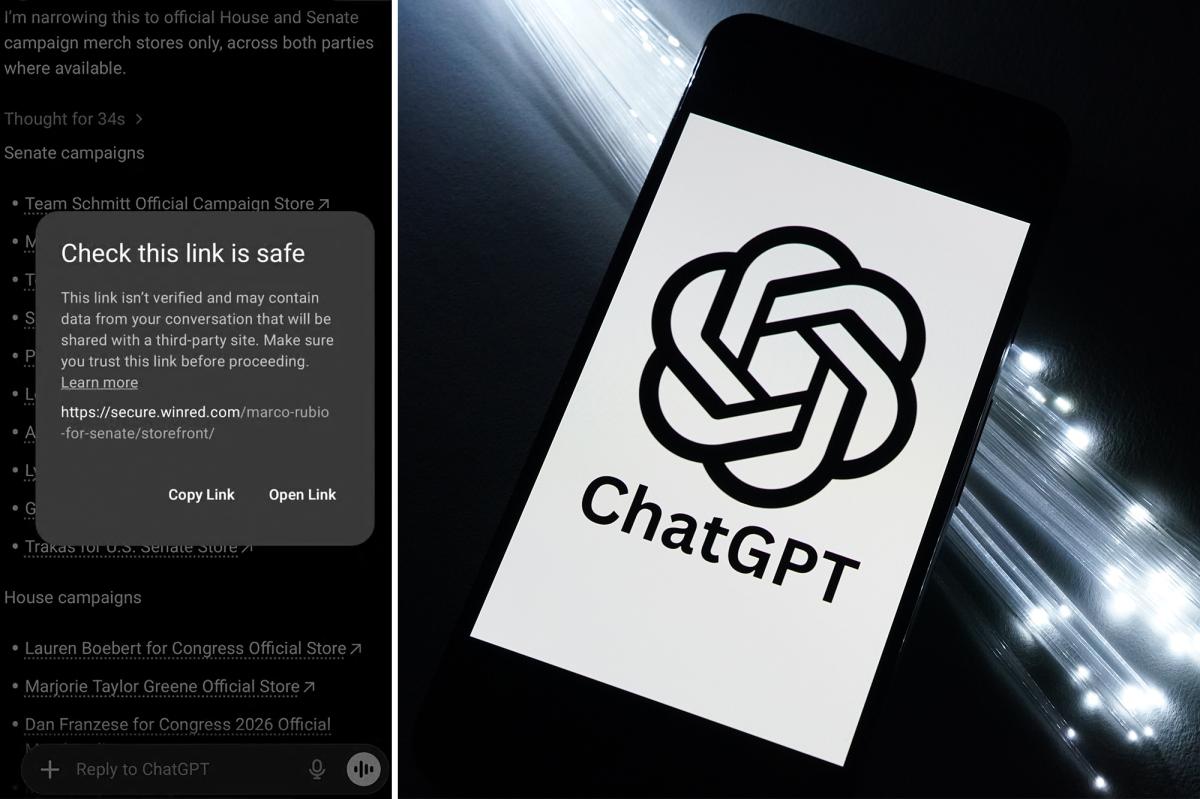

OpenAI’s recent technical fault, in which ChatGPT incorrectly flagged links to the Republican Party’s WinRed donation platform as unsafe while leaving links to the Democratic platform ActBlue unflagged, exposes a vulnerability in AI-driven decision-making systems whose consequences extend beyond U.S. politics. Although the incident appears to stem from algorithmic bias or gaps in a search index, it raises serious questions about the reliability of generative AI wherever trust and neutrality are paramount, particularly for sovereign entities and private-sector platforms in the Middle East and North Africa (MENA). The lopsided impact on a Republican-aligned platform could deepen concerns about AI’s role in democratic processes, a sensitive topic in regions where technology intersects with political stability. For MENA, the incident is a cautionary tale about integrating AI into public infrastructure projects or financial systems that depend on algorithmic fairness. Sovereign capital in the region, which has increasingly been allocated to AI-driven economic transformation, must now prioritize rigorous auditing of such tools to avoid unintended biases that could undermine public trust or distort policy outcomes.

The implications for venture capital in MENA are equally significant. Regional startups in fintech, e-commerce, and political technology often depend on global AI platforms to optimize operations or improve user engagement. A glitch that produces an apparent electoral or financial bias, as this one did, could dent investor confidence, particularly among institutional backers wary of regulatory or reputational risk. Venture investors in countries such as the UAE and Saudi Arabia, which have aggressively courted AI innovation, may find it harder to scale AI-adjacent ventures if end users or regulators come to see algorithmic tools as politically skewed. The event also highlights the need for MENA-based VC funds to co-develop localized AI frameworks that align with regional governance norms and account for cultural and political sensitivities, which could otherwise stall partnerships or weigh on portfolios in politically sensitive sectors.

At the infrastructure level, the incident exposes how poorly prepared regional digital ecosystems are for AI-driven misinformation or bias. MENA’s push toward digital sovereignty, evident in initiatives such as Saudi Arabia’s Vision 2030 and Egypt’s tech hubs, must account for the ethical frameworks governing AI tools deployed across critical sectors, from healthcare to finance. ChatGPT’s uneven flagging of political links mirrors a broader risk: algorithmic systems can inadvertently amplify polarization or distort information flows, a danger magnified in regions with complex socio-political dynamics. For sovereign policymakers, the fallout could mean closer scrutiny of foreign AI tools operating within their jurisdictions, potentially accelerating efforts to develop homegrown alternatives. Regional infrastructure providers, meanwhile, must build AI ethics into their technology roadmaps so that generative tools are impartial as well as functional. Without such safeguards, MENA risks falling behind in using AI for economic growth, as businesses and governments favor platforms that demonstrate reliability and neutrality in an era of heightened geopolitical scrutiny.